How To Calculate Coefficient Of Determination

Correlation doesn't necessarily equal causation, but finding a correlation between two variables in an experiment is still a very important clue as to the relationship between them. That's why tests for correlation are one of the most common types of statistical test used in science, with the most well-known being Pearson's correlation coefficient.

However, the coefficient of determination is arguably more important because it tells you the proportion of the variation in one variable that can be predicted based on the other. That's why learning to perform the coefficient of determination calculation is important for anybody working with correlation-based statistics.

What Is the Coefficient of Determination?

In the two-variable case covered here, the coefficient of determination is the square of Pearson's correlation coefficient, r, which is why it is usually written \(R^2\).

Pearson's coefficient measures correlations, where an increase in one variable either accompanies an increase in another (a positive correlation) or a decrease in it (a negative correlation). The value for r can be anything between −1 and +1, with the magnitude of the number telling you the strength of the correlation and the sign telling you whether it is a positive or a negative correlation.

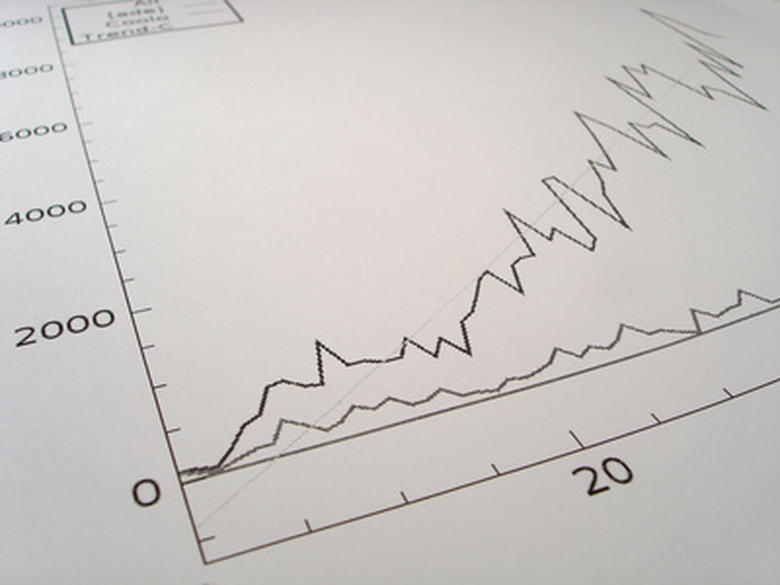

\(R^2\) is the square of this measure, so it varies between 0 and 1, and it tells you the proportion of the variation in one variable that can be predicted from the other. This is useful for many things, particularly building mathematical models for predictive purposes.

Coefficient of Determination Calculation

The process of calculating the coefficient of determination is therefore basically the same as the process of calculating Pearson's correlation coefficient, except at the end you square the result. The formula for Pearson's correlation coefficient is:

\(r=\frac{n\sum xy -\sum x \sum y }{\sqrt{\left(n\sum x^2 -(\sum x)^2\right)\left(n\sum y^2 -(\sum y)^2\right)}}\)

There are some key pieces of information you need to work through this (admittedly scary-looking!) formula: the x and y values for each observation (i.e. your two variables), the sum of the x values and the sum of the y values, the sum of the products xy (each x value multiplied by its corresponding y value), and the sums of the squared x values and the squared y values.

A convenient way to work this out is to use a spreadsheet program like Microsoft Excel, with columns for x, y, xy, \(x^2\) and \(y^2\) and sums at the bottom for each column. You'll also need a value for n, the number of observations in your sample (each of which has an x and a y value).
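The spreadsheet layout described above can also be sketched in a few lines of Python. The data values here are hypothetical, invented purely for illustration, and the variable names are assumptions rather than anything from the original article.

```python
# Hypothetical sample data, invented for illustration only.
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]

# n is the number of observations (each has one x and one y value).
n = len(x)

# One row per observation: x, y, xy, x^2, y^2 (the spreadsheet columns).
rows = [(xi, yi, xi * yi, xi ** 2, yi ** 2) for xi, yi in zip(x, y)]

# Column totals, equivalent to the sums at the bottom of each column.
sum_x, sum_y, sum_xy, sum_x2, sum_y2 = (sum(col) for col in zip(*rows))

# Print the table followed by the totals.
print("{:>5}{:>5}{:>5}{:>5}{:>5}".format("x", "y", "xy", "x^2", "y^2"))
for row in rows:
    print("{:>5}{:>5}{:>5}{:>5}{:>5}".format(*row))
print("sums:", sum_x, sum_y, sum_xy, sum_x2, sum_y2, "| n =", n)
```

For this made-up data set the column totals are 15, 20, 66, 55 and 86, with n = 5.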

Run through the process indicated by the formula. First, take n multiplied by the sum of your xy values, and then subtract the sum of x values multiplied by the sum of y values.

Divide this result by the bottom section. To find it, take n times the sum of the squared x values and subtract the square of the sum of the x values. Do the same for the y values, multiply the two results together, and take the square root. Dividing the top section by this square root gives you r, which you simply square to obtain \(R^2\).
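The steps just described can be written as a short Python function. This is a minimal sketch rather than a definitive implementation: the function names `pearson_r` and `coefficient_of_determination` and the sample data are hypothetical, chosen only to illustrate the calculation.

```python
import math


def pearson_r(x, y):
    """Pearson's r from the raw-sums formula; x and y are equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y need the same length and at least two observations")

    sum_x, sum_y = sum(x), sum(y)
    sum_xy = sum(a * b for a, b in zip(x, y))
    sum_x2 = sum(a * a for a in x)
    sum_y2 = sum(b * b for b in y)

    # Top section: n * sum(xy) - sum(x) * sum(y)
    numerator = n * sum_xy - sum_x * sum_y
    # Bottom section: square root of the product of the x term and the y term
    denominator = math.sqrt((n * sum_x2 - sum_x ** 2) * (n * sum_y2 - sum_y ** 2))
    if denominator == 0:
        raise ValueError("r is undefined when x or y has no variation")
    return numerator / denominator


def coefficient_of_determination(x, y):
    """Square of Pearson's r for two variables."""
    return pearson_r(x, y) ** 2


# Hypothetical sample data, invented for illustration only.
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]

r = pearson_r(x, y)
r_squared = coefficient_of_determination(x, y)
print(round(r, 4), round(r_squared, 4))  # prints: 0.7746 0.6
```

With this data the top section is 5 × 66 − 15 × 20 = 30 and the bottom section is the square root of 50 × 30 = 1500, so r is roughly 0.77 and \(R^2\) is 0.6.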

Interpreting the Coefficient of Determination

The coefficient of determination is a number between 0 and 1, which can be converted to a percentage by multiplying by 100. The standard interpretation of the coefficient of determination is the proportion of the variation in y that can be explained by x, in other words, how well the data fits the regression model you're using to describe it.
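To make the "explained variation" reading concrete, the sketch below fits a least-squares straight line to some hypothetical data and computes one minus the ratio of the unexplained variation to the total variation in y. For a straight-line fit with an intercept, this gives the same value as squaring Pearson's r. The function name `r_squared_from_fit` and the data are assumptions made for illustration.

```python
def r_squared_from_fit(x, y):
    """R^2 of a least-squares straight-line fit, as 1 - SS_res / SS_tot."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n

    # Least-squares slope and intercept for the line y = intercept + slope * x.
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    slope = s_xy / s_xx
    intercept = mean_y - slope * mean_x

    # Variation left over after the fit (residual sum of squares).
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    # Total variation of y about its mean.
    ss_tot = sum((b - mean_y) ** 2 for b in y)
    return 1 - ss_res / ss_tot


# Hypothetical sample data, invented for illustration only.
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]

r2 = r_squared_from_fit(x, y)
print(f"R^2 = {r2:.3f} ({r2:.0%} of the variation in y)")
# prints: R^2 = 0.600 (60% of the variation in y)
```

Here the fitted line leaves 2.4 of the total variation of 6 unexplained, so x accounts for 60 percent of the variation in y.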

However, it's important to note the usual caveats present in data based on correlations. It's entirely possible for two variables to be correlated without being causally related.

For example, take the relationship between the use of hearing aids and the number of wrinkles on your skin. There is a strong correlation between the two but of course both are really caused by old age. This isn't a flaw with the approach so much as a limitation you have to take into account to interpret the results correctly.

Cite This Article

MLA

Johnson, Lee. "How To Calculate Coefficient Of Determination" sciencing.com, https://www.sciencing.com/calculate-coefficient-determination-6399202/. 14 February 2020.

Chicago

Johnson, Lee. How To Calculate Coefficient Of Determination last modified March 24, 2022. https://www.sciencing.com/calculate-coefficient-determination-6399202/