How To Calculate SSE

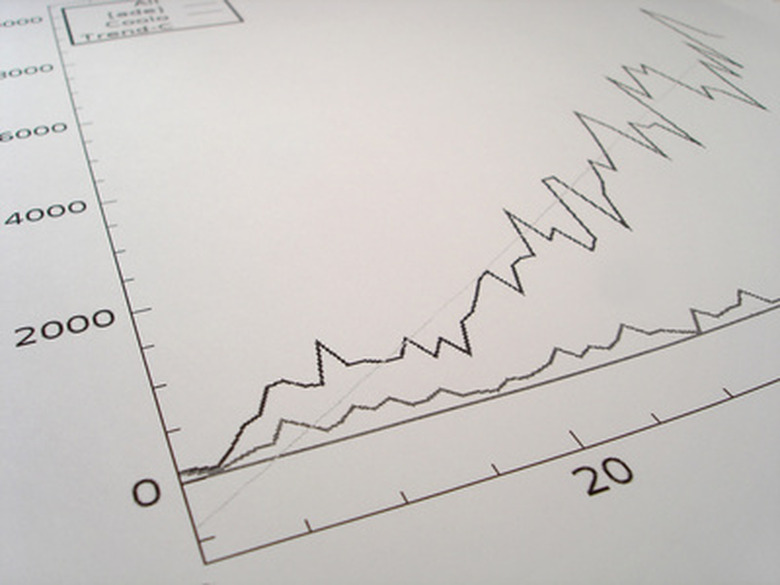

When you fit a straight line to a set of data, you will usually want to know how well the resulting line fits that data. One way to find out is to calculate the sum of squares error (SSE). This value measures how closely the line of best fit approximates the data set. The SSE is an important quantity in the analysis of experimental data and can be determined in only a few short steps.

Step 1

Find a line of best fit to model the data using regression. The line of best fit has the form y = ax + b, where a (the slope) and b (the intercept) are parameters that you need to determine. You can find these parameters using a simple linear regression analysis. For example, assume the line of best fit has the form y = 0.8x + 7.
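As a rough illustration of this step, the sketch below finds a and b with the standard least-squares formulas in plain Python. The function name `fit_line` and the three data points are assumptions made for this example only; the points were chosen so that the fit comes out as y = 0.8x + 7.

```python
def fit_line(xs, ys):
    """Return (a, b) for the least-squares line y = a*x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: co-variation of x and y divided by the variation of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    a = sxy / sxx
    # Intercept: the line passes through the point (mean_x, mean_y).
    b = mean_y - a * mean_x
    return a, b


# Hypothetical observations, not taken from a real experiment.
xs = [1, 2, 3]
ys = [7.9, 8.4, 9.5]

a, b = fit_line(xs, ys)
print(round(a, 6), round(b, 6))
```

For larger data sets, a statistics package or spreadsheet would normally do this fitting for you.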

Step 2

Use the equation to determine the y-value that the line of best fit predicts for each x-value in the data. You can do this by substituting each x-value into the equation of the line. For example, if x is equal to 1, substituting that into the equation y = 0.8x + 7 gives 7.8 for the y-value.
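The substitution can be sketched in a few lines of Python. The helper name `predict` and the x-values 1, 2 and 3 are assumptions for illustration; the slope and intercept come from the example line y = 0.8x + 7.

```python
def predict(a, b, xs):
    """Evaluate the line y = a*x + b at each x-value."""
    return [a * x + b for x in xs]


# Assumed x-values; a = 0.8 and b = 7 are the example line's parameters.
predicted = predict(0.8, 7, [1, 2, 3])
print([round(p, 2) for p in predicted])  # [7.8, 8.6, 9.4]
```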

Step 3

Find the error for each data point by subtracting the y-value predicted by the line of best fit from the observed y-value at the same x. For example, assume the observed y-values at x = 1, 2 and 3 are 7.9, 8.4 and 9.5, and the line of best fit predicts 7.8, 8.6 and 9.4. The errors are then 7.9 - 7.8 = 0.1, 8.4 - 8.6 = -0.2 and 9.5 - 9.4 = 0.1.

Step 4

Square each of the errors from Step 3. In our example, squaring 0.1, -0.2 and 0.1 gives 0.01, 0.04 and 0.01. Squaring makes every term positive, so errors above and below the line cannot cancel each other out.

Step 5

Sum all the squared values from Step 4. In our example, summing 0.01, 0.04 and 0.01 gives 0.06. This is the sum of squares error. The closer it is to zero, the more closely the line fits the data.

Warning

Always compare the line of best fit with the observed data. Summing the squared differences between the predicted values and their own mean gives a different quantity, the explained (or regression) sum of squares, which does not tell you how far the data points lie from the line.
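A minimal Python sketch of the sum of squared errors, taken here in its usual sense as the sum of the squared differences between each observed y-value and the value the line predicts. The function name `sum_squared_errors` and the observed values are assumptions made for illustration; the line is the example y = 0.8x + 7.

```python
def sum_squared_errors(observed, predicted):
    """Sum of squared differences between observed and predicted values."""
    return sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))


xs = [1, 2, 3]
observed = [7.9, 8.4, 9.5]             # hypothetical measurements
predicted = [0.8 * x + 7 for x in xs]  # values on the example line

sse = sum_squared_errors(observed, predicted)
print(round(sse, 4))  # 0.06
```

A value this close to zero would suggest that the points lie very near the line, although what counts as small depends on the units and scale of the data.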

Cite This Article

Bourdin, Thomas. "How To Calculate SSE." sciencing.com, 24 April 2017, last modified March 24, 2022. https://www.sciencing.com/calculate-sse-7479121/