Water Vapor Pressure Vs. Humidity

You may sometimes hear weather forecasters, scientists and engineers talk about humidity using a variety of terms — such as relative humidity, vapor pressure and absolute humidity. All of these are just different ways to talk about the amount of water vapor in the air. Understanding what each of them means will help you avoid confusion.

Vapor Pressure

If you put some water in a closed container, the water will start to evaporate. As the concentration of water vapor increases, so does the rate at which water vapor condenses on the sides of the container and forms drops. Eventually the rate of condensation equals the rate of evaporation, so the concentration of water vapor stops changing. This point is called equilibrium, and the pressure of the water vapor at equilibrium is called the equilibrium or saturation vapor pressure. The pressure that water vapor actually exerts in the air at any given moment is the actual vapor pressure.

Vapor pressure is measured in ordinary pressure units. Common ones include the bar, which is slightly less than standard atmospheric pressure at sea level (1 atmosphere is about 1.013 bar), and the Torr, which is defined as standard atmospheric pressure divided by 760. In other words, standard atmospheric pressure at sea level is 760 Torr.
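The relationships between these units can be sketched in a few lines of Python. This is only an illustration: the function name `convert_pressure` and the set of supported units are choices made for this example, while the conversion factors are the standard definitions (1 atmosphere = 101,325 pascals, 1 bar = 100,000 pascals, 1 Torr = 1/760 atmosphere).

```python
# Standard definitions, expressed in pascals (Pa).
PA_PER_ATM = 101_325.0          # 1 standard atmosphere
PA_PER_BAR = 100_000.0          # 1 bar
PA_PER_TORR = PA_PER_ATM / 760  # 1 Torr is 1/760 of an atmosphere


def convert_pressure(value, from_unit, to_unit):
    """Convert a pressure between 'Pa', 'bar', 'atm', and 'Torr'.

    Hypothetical helper for illustration; it converts through pascals.
    """
    pa_per_unit = {
        "Pa": 1.0,
        "bar": PA_PER_BAR,
        "atm": PA_PER_ATM,
        "Torr": PA_PER_TORR,
    }
    return value * pa_per_unit[from_unit] / pa_per_unit[to_unit]


# One atmosphere expressed in Torr and in bar.
print(convert_pressure(1.0, "atm", "Torr"))
print(convert_pressure(1.0, "atm", "bar"))
```

Converting through a single base unit keeps the table small: adding another unit only requires one more entry rather than a factor for every pair.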

Relative Humidity

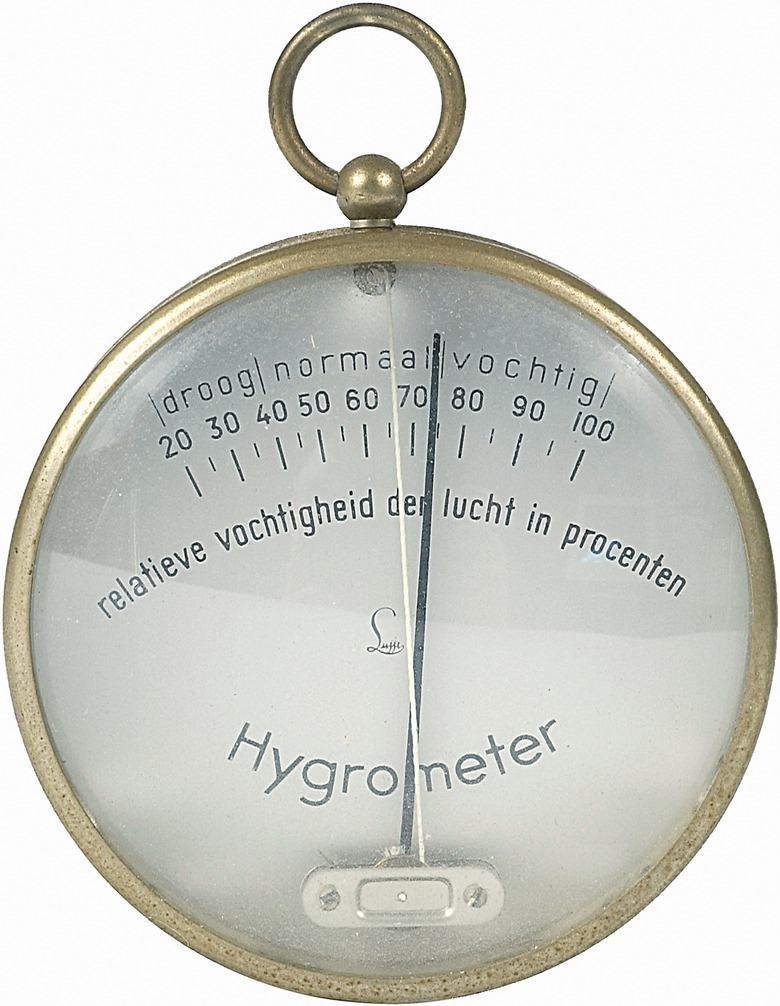

Most of the time, the air is not saturated with water vapor. In other words, the actual vapor pressure is usually lower than the equilibrium vapor pressure. Relative humidity measures how much water vapor the air currently contains compared with what it would contain if saturated at the same temperature, expressed as a percentage. If the amount of water vapor in the air is just half of the saturation amount, for example, the relative humidity is 50 percent. Relative humidity is useful because, together with temperature, it strongly affects your level of comfort: how wet or dry the air "feels."
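As a rough sketch, relative humidity can be computed as the ratio of actual vapor pressure to saturation vapor pressure. The article does not give a formula for saturation vapor pressure, so the example below assumes a Magnus-type approximation with one commonly used set of coefficients; treat it as an estimate over liquid water at everyday temperatures, not an exact value. The function names are made up for this example.

```python
import math


def saturation_vapor_pressure_hpa(temp_c):
    """Approximate saturation vapor pressure over liquid water, in hPa.

    Assumption: Magnus-type approximation (coefficients 6.1094, 17.625,
    243.04); this is not a formula taken from the article.
    """
    return 6.1094 * math.exp(17.625 * temp_c / (temp_c + 243.04))


def relative_humidity_percent(actual_vp_hpa, temp_c):
    """Relative humidity: actual vapor pressure as a percentage of saturation."""
    return 100.0 * actual_vp_hpa / saturation_vapor_pressure_hpa(temp_c)


# Air at 25 degrees C holding half the saturation amount of water vapor.
half_saturated = 0.5 * saturation_vapor_pressure_hpa(25.0)
print(relative_humidity_percent(half_saturated, 25.0))
```

Because the saturation value rises with temperature, the same actual vapor pressure gives a lower relative humidity in warmer air.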

Absolute Humidity

Absolute humidity is probably the simplest way to think about water vapor. It measures the mass of water vapor per unit volume of air, usually in grams of water vapor per cubic meter of air. Saturation vapor pressure describes how much water vapor the air would contain if saturated at a given temperature; absolute humidity, by contrast, measures how much water vapor it actually contains, and relative humidity compares the actual amount with the saturation amount.
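Absolute humidity and actual vapor pressure are linked through the ideal gas law. The sketch below assumes water vapor behaves as an ideal gas with a specific gas constant of about 461.5 joules per kilogram per kelvin; the function name and argument units are choices made for this illustration, not something the article specifies.

```python
# Assumption: water vapor treated as an ideal gas.
R_WATER_VAPOR = 461.5  # specific gas constant of water vapor, J/(kg*K)


def absolute_humidity_g_per_m3(actual_vp_pa, temp_c):
    """Grams of water vapor per cubic meter, from vapor pressure (Pa) and temperature (C)."""
    temp_k = temp_c + 273.15
    density_kg_per_m3 = actual_vp_pa / (R_WATER_VAPOR * temp_k)
    return 1000.0 * density_kg_per_m3


# Roughly saturated air at 20 degrees C (vapor pressure near 2339 Pa).
print(absolute_humidity_g_per_m3(2339.0, 20.0))
```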

Dew Point

Relative humidity and equilibrium vapor pressure depend on temperature. As the temperature increases, the equilibrium vapor pressure also increases, so unless the amount of water vapor present in the air rises too, the relative humidity goes down. Dew point is a measure of the air's moisture content that does not change as the air warms or cools (as long as the water content and pressure stay the same), and that's why it's often used by meteorologists. If you take the air and cool it down without changing its water content, the equilibrium vapor pressure falls until it equals the actual vapor pressure; cool the air any further and water starts to condense on leaves and the ground in the form of dew. The temperature at which this happens is called the dew point.
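One way to estimate the dew point is to find the temperature at which the saturation vapor pressure would equal the actual vapor pressure. The sketch below assumes a Magnus-type approximation for saturation vapor pressure and inverts it algebraically; the coefficients and function names are assumptions for this example, and the result is an estimate rather than a measured value.

```python
import math

# Assumed Magnus-type coefficients for saturation over liquid water.
A_HPA = 6.1094
B = 17.625
C_DEG = 243.04


def saturation_vapor_pressure_hpa(temp_c):
    """Approximate saturation vapor pressure (hPa) at temp_c degrees C."""
    return A_HPA * math.exp(B * temp_c / (temp_c + C_DEG))


def dew_point_c(actual_vp_hpa):
    """Temperature (C) at which the given vapor pressure would be saturated."""
    gamma = math.log(actual_vp_hpa / A_HPA)
    return C_DEG * gamma / (B - gamma)


def dew_point_from_rh(temp_c, rh_percent):
    """Dew point (C) from air temperature and relative humidity."""
    actual_vp = rh_percent / 100.0 * saturation_vapor_pressure_hpa(temp_c)
    return dew_point_c(actual_vp)


# Air at 20 degrees C and 50 percent relative humidity.
print(dew_point_from_rh(20.0, 50.0))
```

At 100 percent relative humidity the estimated dew point equals the air temperature, which matches the description above: the air is already saturated, so any cooling produces condensation.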

Brennan, John. "Water Vapor Pressure Vs. Humidity." sciencing.com, 24 April 2017 (last modified March 24, 2022). https://www.sciencing.com/water-vapor-pressure-vs-humidity-19402/