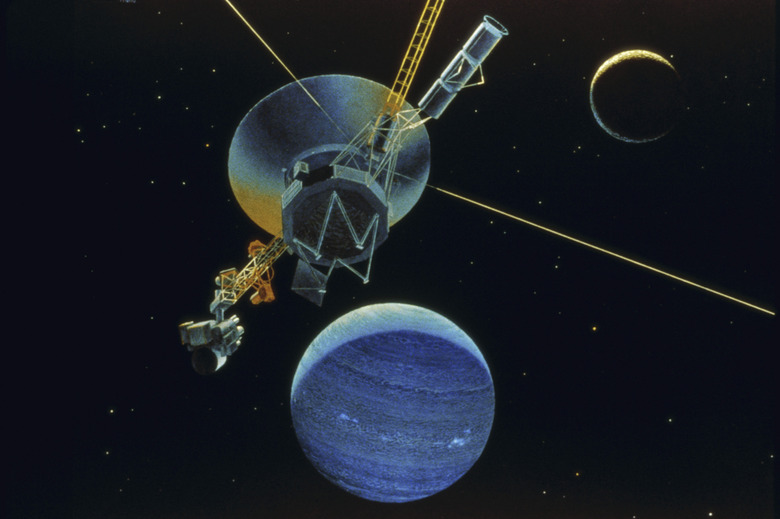

What Is The Wind Speed On Neptune?

On Earth, the sun's energy drives the winds; so on Neptune, where the sun appears not much larger than a star, you would expect weak winds. However, the opposite is true. Neptune has the strongest surface winds in the solar system. Most of the energy fueling these winds comes from the planet itself.

Winds on the Gas Giants

Compared with any of the gas giant planets, Earth's atmosphere is a pool of serenity. On Jupiter, winds in the Little Red Spot reach 618 kilometers per hour (384 miles per hour), which is almost twice as fast as winds in the fiercest terrestrial hurricane. On Saturn, winds in the upper atmosphere can blow almost three times as fast as that, at 1,800 kilometers per hour (1,118 miles per hour). Even these winds take a back seat to those near Neptune's Great Dark Spot, which astronomers have clocked at 1,931 kilometers per hour (1,200 miles per hour).
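The unit conversions and the "almost three times" comparison above can be checked with a few lines of arithmetic. The following is a minimal sketch, not part of the original article: the function name `kmh_to_mph` and the dictionary `wind_speeds_kmh` are illustrative names, and the only outside fact used is the definition of the international mile (1.609344 kilometers).

```python
# Illustrative check of the wind speeds quoted in the article.
# All names here are hypothetical helpers, not from the source.

KM_PER_MILE = 1.609344  # international mile, exact by definition


def kmh_to_mph(kmh: float) -> float:
    """Convert kilometers per hour to miles per hour."""
    return kmh / KM_PER_MILE


# Speeds in km/h, as quoted in the article.
wind_speeds_kmh = {
    "Jupiter (Little Red Spot)": 618,
    "Saturn (upper atmosphere)": 1800,
    "Neptune (near Great Dark Spot)": 1931,
}

for place, kmh in wind_speeds_kmh.items():
    print(f"{place}: {kmh} km/h is about {kmh_to_mph(kmh):.0f} mph")

# Ratio behind the "almost three times" comparison of Saturn to Jupiter.
saturn_vs_jupiter = (
    wind_speeds_kmh["Saturn (upper atmosphere)"]
    / wind_speeds_kmh["Jupiter (Little Red Spot)"]
)
print(f"Saturn / Jupiter ratio: {saturn_vs_jupiter:.2f}")
```

Rounded to whole numbers, the converted values agree with the mile-per-hour figures given in the text, and the Saturn-to-Jupiter ratio comes out slightly under three.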

An Energy Generator

Like Jupiter and Saturn, Neptune radiates more energy than it receives from the sun, and this energy coming from the planet's core is what drives the strong surface winds. Jupiter radiates energy left over from its formation, and the energy that Saturn radiates is largely the result of friction produced by helium rain. On Neptune, a blanket of methane, which is a greenhouse gas, traps heat. If the planet were like Uranus (which lacks an internal energy source), that heat would have radiated into space long ago. Instead, even though temperatures are frigid, the planet radiates 2.7 times more heat than it receives from the sun, which is enough to drive its ferocious winds.

Cite This Article

MLA

Deziel, Chris. "What Is The Wind Speed On Neptune?" sciencing.com, https://www.sciencing.com/what-wind-speed-neptune-4727681/. 24 April 2017.

APA

Deziel, Chris. (2017, April 24). What Is The Wind Speed On Neptune? sciencing.com. Retrieved from https://www.sciencing.com/what-wind-speed-neptune-4727681/

Chicago

Deziel, Chris. What Is The Wind Speed On Neptune? last modified March 24, 2022. https://www.sciencing.com/what-wind-speed-neptune-4727681/